Case Study: ‘Barbara Walters’ appears on CNN’s ‘New Year’s Eve Live’ from over 2,700 miles away via AR


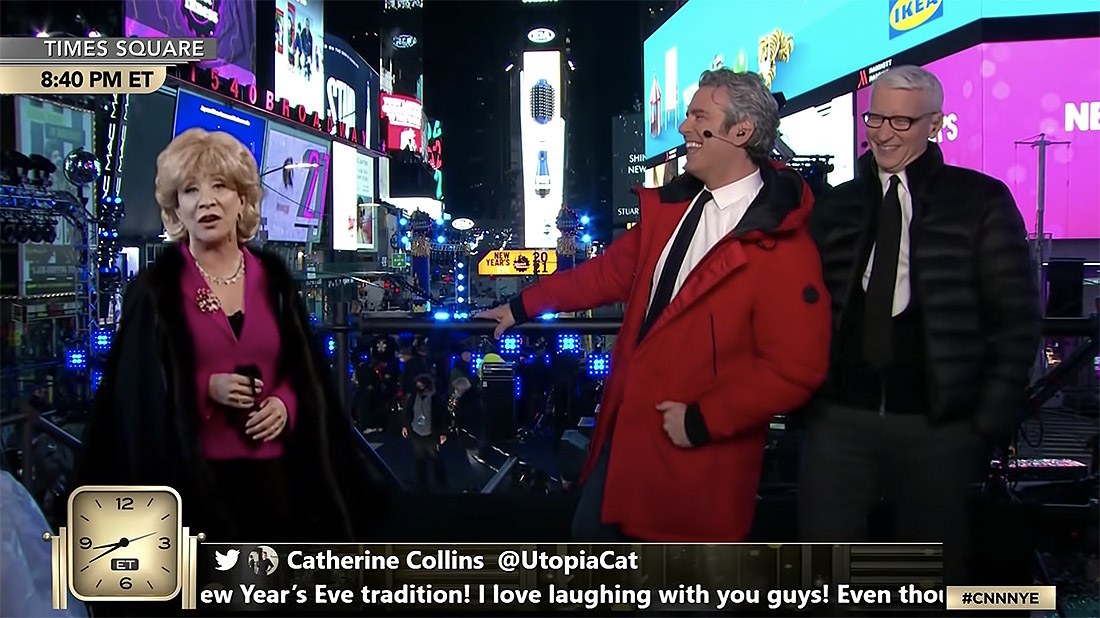

If you never saw the “teleportation” moment, no one would blame you for thinking Cheri Oteri actually showed up to CNN’s “New Year’s Eve Live” this year. Her “Barbara Walters” appearance was so seamless, she looked like just another guest cracking jokes with Anderson Cooper and Andy Cohen in Times Square. In reality, she was clear across the country, riffing in an LA studio with the help of a custom AR system and the team at Silver Spoon Animation.

The special, seen by 3.4 million viewers, was CNN’s biggest NYE audience to date – but it began like most projects in 2020, with an eye on the constraints.

The talent and crew had to be protected, for one. Times Square would be fairly emptied out, for another, which put the majority of the broadcast’s visual intrigue squarely in CNN’s lap. But the carrot was big. With so many people stuck at home, there were more potential viewers than ever before, all of whom were looking for entertainment – and maybe a sense of normalcy – while riding out the pandemic.

“One idea that came up pretty quickly was ‘beaming’ guests in from our LA studio,” said Jason Hochheimer, manager of technical operations and engineering at CNN. “‘New Year’s Eve Live’ is one of our less serious programs, and this way we could maintain the show’s playful nature while keeping our guests safe. It checked all the boxes.”

CNN turned to Silver Spoon, a real-time virtual production studio based in Brooklyn, for the assignment, as they had spent the last few years creating high-end AR for over 150 broadcasts. Unlike other AR productions, though, the project had a unique wrinkle when it came to the camera tracking. The classic 3D environment would be non-existent, replaced instead by live 2D footage that not only needed to line the guest up with the hosts, but also allow for the onsite cameraman to employ a variety of camera moves and focal adjustments without breaking the effect.

“It’s a little effect, but there’s a lot that goes into it,” said Dan Pack, managing director at Silver Spoon Animation. “You have two separate studios, a live production and all of those light sources in Times Square complicating the footage, as well as the delay between coasts. Success meant Cheri would look completely normal to the average viewer, but we had to get it right.”

With only two weeks till air, Silver Spoon quickly customized one of their AR servers for the project, integrating it with CNN’s LA studio remotely so Silver Spoon could manage the effect safely in New York. But while an early link could be established in LA, Times Square was a different story since the CNN crew wouldn’t be able to load in until 4 p.m. on the 31st.

“Everything had to be locked by the time CNN started airing at 8 p.m., which meant our gear had to be incredibly responsive. We had already been using Pixotope to manage AR on our other projects, but this would be one of our first run-throughs with Ncam’s new Mk2 tracking system. With the event being outside, and us not able to be onsite, we needed something that could set up quickly and provide seamless results. Ncam gave us all that flexibility.”

Since CNN had already been employing Ncam tech on their broadcasts for years, the Mk2 fit right in.

“The Mk2 camera bar and server were much smaller and more manageable than we expected,” said Hochheimer. “CNN’s tech manager, Frank Slany, was able to mount each piece directly to the main camera tripod in New York without having to outfit the gear at another location. Once calibrated, the camera bar was able to track points directly on the talent platform without becoming adversely affected by the commotion and movement going on in the background. And between the confetti blasts, fog FX, revelers and intermittent rain, there was a lot of movement going on in the background.”

To contend with the various light sources around the platform, Silver Spoon suggested putting down fiducial markers on the floor to give Ncam Reality, Mk2’s software arm, better information for its point cloud. Although the Mk2 doesn’t need external markers, it can accommodate them to provide more flexibility in outdoor situations, making the tracker essential for shoots where lighting variables add high degrees of complexity to an environment.

“Ncam is the only tracker that could have pulled this off in four hours. Anything else would have needed days of setup time, multiple trackers and a lot of extra work to accommodate them,” added Pack. “With Ncam, CNN could set up and shoot outdoors day-of, without us even being there. It’s a wonderfully efficient way to do things, especially in a year like this.”

Once the system was locked, the performers only had to wait for their cue and they could interact with Anderson and Andy from afar, with viewers being none the wiser. Oteri’s performance drew raves for the second year in a row, and had both hosts doubled over in fits of laughter. Not bad for two weeks of work, and another feather in the expanding cap of virtual production.
