Column: Orchestration and workflow – what’s the difference?

Subscribe to NCS for the latest news, project case studies and product announcements in broadcast technology, creative design and engineering delivered to your inbox.

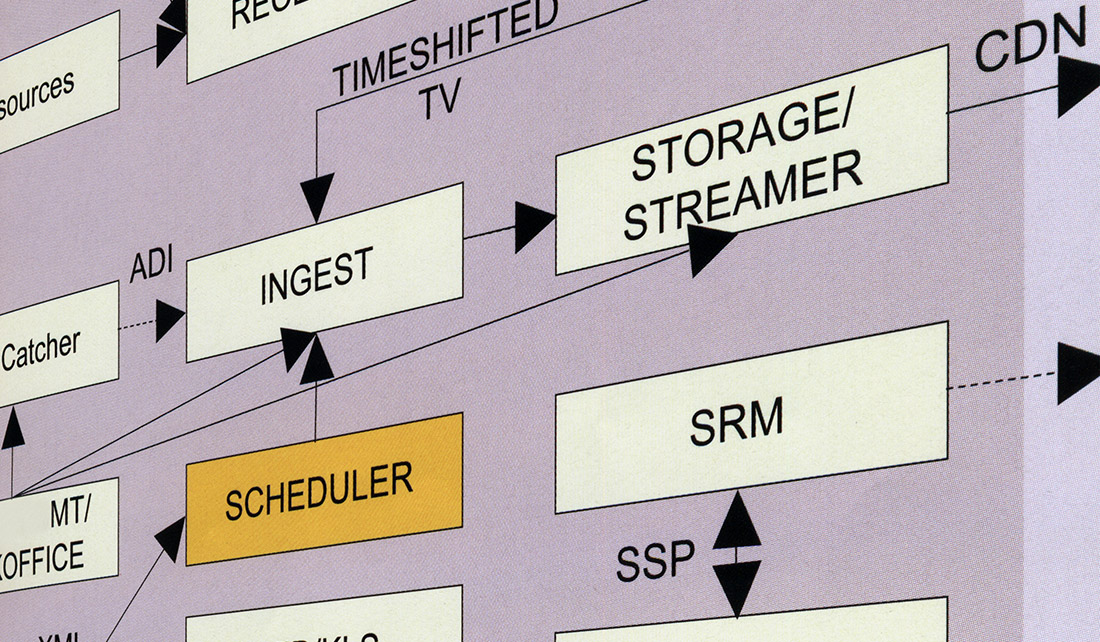

Both get stuff done, but they aren’t the same thing. Workflow links high-level processes together, often using monolithic applications. Orchestration works not only with high-level processes but also with low-level services and even lower-level virtual infrastructure – gauging changing demand and scaling to meet it. In this sense, orchestration can be considered to be an evolution of workflow.

The term “workflow” has been around longer than the term “orchestration.” Essentially, workflow provides the ability to conditionally chain a series of tasks. Workflow assumes that each task and the resources required to carry it out are immediately available. Orchestration, like workflow, provides the ability to conditionally chain tasks, but it is also aware of and controls the technical infrastructure required to support the tasks. Specifically, orchestration can control the technical resources on which tasks will run.

For instance, a workflow may route a program to different transcode profiles based on its metadata. Workflow assumes the transcoding resources are available any time they are needed, but it does not instantiate, start or stop them.
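The metadata-driven routing described above can be sketched in a few lines of Python. This is an illustrative sketch only: the `Program` class, the profile names and the `transcoders` mapping are hypothetical and do not come from any particular product. Note that the workflow only looks up a transcoder that is assumed to be running already; it never starts or stops one.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Program:
    title: str
    metadata: dict = field(default_factory=dict)


def choose_profile(program: Program) -> str:
    """Pick a transcode profile from metadata (profile names are hypothetical)."""
    resolution = program.metadata.get("resolution")
    if resolution == "UHD":
        return "hevc_2160p"
    if resolution == "HD":
        return "h264_1080p"
    return "h264_576p"


def run_workflow(program: Program,
                 transcoders: Dict[str, Callable[[Program], str]]) -> str:
    """Conditionally chain two tasks: choose a profile, then transcode."""
    profile = choose_profile(program)
    # Workflow assumes the resource is already available. If it is not,
    # this lookup simply fails; nothing here can start a transcoder.
    transcoder = transcoders[profile]
    return transcoder(program)


# Stand-ins for transcoders that are assumed to be permanently running.
transcoders = {
    name: (lambda p, name=name: f"{p.title} -> {name}")
    for name in ("hevc_2160p", "h264_1080p", "h264_576p")
}

print(run_workflow(Program("Evening News", {"resolution": "HD"}), transcoders))
```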

Orchestration takes workflow to the next level by dynamically controlling the availability of resources – spinning them up when a task requires them and shutting them down when they are no longer needed. If more capacity is needed, orchestration can start more instances to run more tasks in parallel, essentially scaling horizontally to meet demand.
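As a rough illustration of that behavior, the sketch below models an orchestrator that adds and removes workers so the number running matches the number of pending tasks. The class name, the `tasks_per_worker` and `max_workers` parameters and the worker names are all assumptions made for this example; a real orchestration engine would call a container or cloud API where this code merely edits a list.

```python
import math


class Orchestrator:
    """Toy model: keep just enough workers running for the pending tasks."""

    def __init__(self, tasks_per_worker: int = 1, max_workers: int = 10):
        self.tasks_per_worker = tasks_per_worker
        self.max_workers = max_workers
        self.workers = []      # names of the workers currently running
        self._next_id = 0

    def desired_workers(self, pending_tasks: int) -> int:
        """Workers needed for the current demand, capped at max_workers."""
        needed = math.ceil(pending_tasks / self.tasks_per_worker)
        return min(self.max_workers, needed)

    def reconcile(self, pending_tasks: int) -> int:
        """Start or stop workers until the running count matches demand."""
        target = self.desired_workers(pending_tasks)
        while len(self.workers) < target:
            self._next_id += 1
            # Placeholder for "instantiate and start a resource".
            self.workers.append(f"transcoder-{self._next_id}")
        while len(self.workers) > target:
            # Placeholder for "stop a resource that is no longer required".
            self.workers.pop()
        return len(self.workers)


orchestrator = Orchestrator(tasks_per_worker=2, max_workers=4)
for pending in (0, 5, 20, 1, 0):
    print(pending, "pending ->", orchestrator.reconcile(pending), "workers")
```

The cap on `max_workers` is a design choice for the sketch: it stands in for whatever budget or quota limits how far the platform is allowed to scale.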

| Capability | Workflow | Orchestration |
| --- | --- | --- |
| Chain one or more tasks | Yes | Yes |
| Conditionally run a task based on metadata | Yes | Yes |
| Emphasis on application and service integration | Yes | No |
| Emphasis on service and container integration | No | Yes |
| Dynamically start any number of task-dependent resources/containers when needed | No | Yes |
| Dynamically stop a task-dependent resource/container when needed | No | Yes |
| Automatically choose the optimal processing environment to run a task when it is needed | No | Yes |
| Dynamically scalable | No | Yes |

Consider this analogy. Both workflow and orchestration might control a group of people playing instruments. The difference between the two is that with workflow all the musicians are present on stage all the time, whether they are currently playing or not. With orchestration, specific musicians are called to the stage from a pool of available musicians only when it is time for their instrument to be played, and leave the stage when they are not playing. If more volume is needed from a particular type of instrument, orchestration can dynamically bring more players of that type into the performance.

From an end-user perspective, workflow and orchestration applications and UIs appear very similar. Both are generally driven through a flow-chart-like user interface where processes, represented as boxes, are connected to each other with lines, and optionally include decision trees. In the background, however, orchestration differs from workflow in that an orchestration engine is aware of available resources and spins them up and down as required at different stages of the orchestrated workflow. Workflow is generally not scalable and is generally limited to building queues of tasks for sequential execution by a limited number of resources.

The granularity enabled by orchestration means that it generally processes tasks more quickly and more efficiently than workflow. Operational efficiency increases further because resources are deployed and spun down as required: with orchestration, you generally pay only for the resources you actually use.
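To make the speed and cost argument concrete, here is a small back-of-the-envelope model in Python. It is a sketch under simplifying assumptions that are not from the article: a fixed pool is billed for every worker for the whole run, an on-demand worker is billed only while its task runs, and start-up time is ignored (in practice, start-up time narrows the gap). The task durations are made-up illustrative values, not measurements.

```python
import heapq
from typing import List, Tuple


def fixed_pool(durations: List[float], workers: int) -> Tuple[float, float]:
    """Queue tasks onto a fixed pool (workers >= 1).

    Returns (elapsed_time, billed_worker_time), assuming every worker
    is billed for the whole run whether it is busy or idle.
    """
    free_at = [0.0] * workers          # time at which each worker is next free
    heapq.heapify(free_at)
    for duration in durations:
        start = heapq.heappop(free_at)  # earliest available worker takes the task
        heapq.heappush(free_at, start + duration)
    elapsed = max(free_at)
    return elapsed, elapsed * workers


def on_demand(durations: List[float]) -> Tuple[float, float]:
    """One worker per task, started when needed and stopped when done.

    Returns (elapsed_time, billed_worker_time), billing only busy time.
    """
    return max(durations, default=0.0), sum(durations)


durations = [1, 1, 1, 3]   # hypothetical task lengths in hours
print("fixed pool of 2:", fixed_pool(durations, workers=2))
print("on demand:      ", on_demand(durations))
```

With these illustrative numbers, the fixed pool of two workers takes 4 hours and is billed for 8 worker-hours, while the on-demand model takes 3 hours and is billed for 6.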

The benefits of orchestration applications over basic workflow applications are apparent, but as with most things, higher efficiency and “better” performance come with a greater price tag. When selecting applications and platforms, it is important to evaluate the cost savings inherent in orchestration against the initial purchase price, and against associated running costs. The initial investment may be greater, but the savings made over a given period may outweigh this initial cost.

