Adobe’s Paul Saccone on Premiere Pro updates including Generative AI


At the NAB Show 2024, Adobe unveiled its roadmap for infusing artificial intelligence capabilities into Adobe Premiere Pro, including support for third-party AI models.

Paul Saccone, senior director of pro video marketing at Adobe, sat down with NewscastStudio to discuss the company’s vision for AI in video production and forthcoming product updates.

Generative AI comes to Adobe Premiere Pro

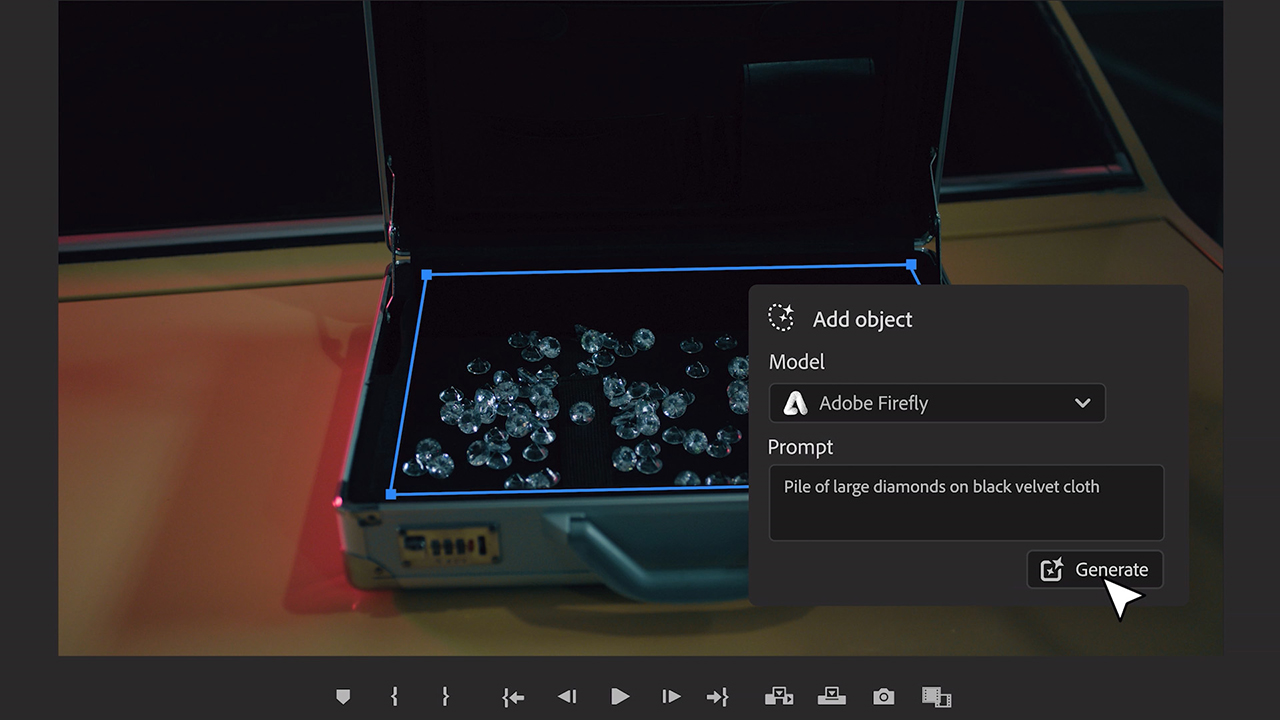

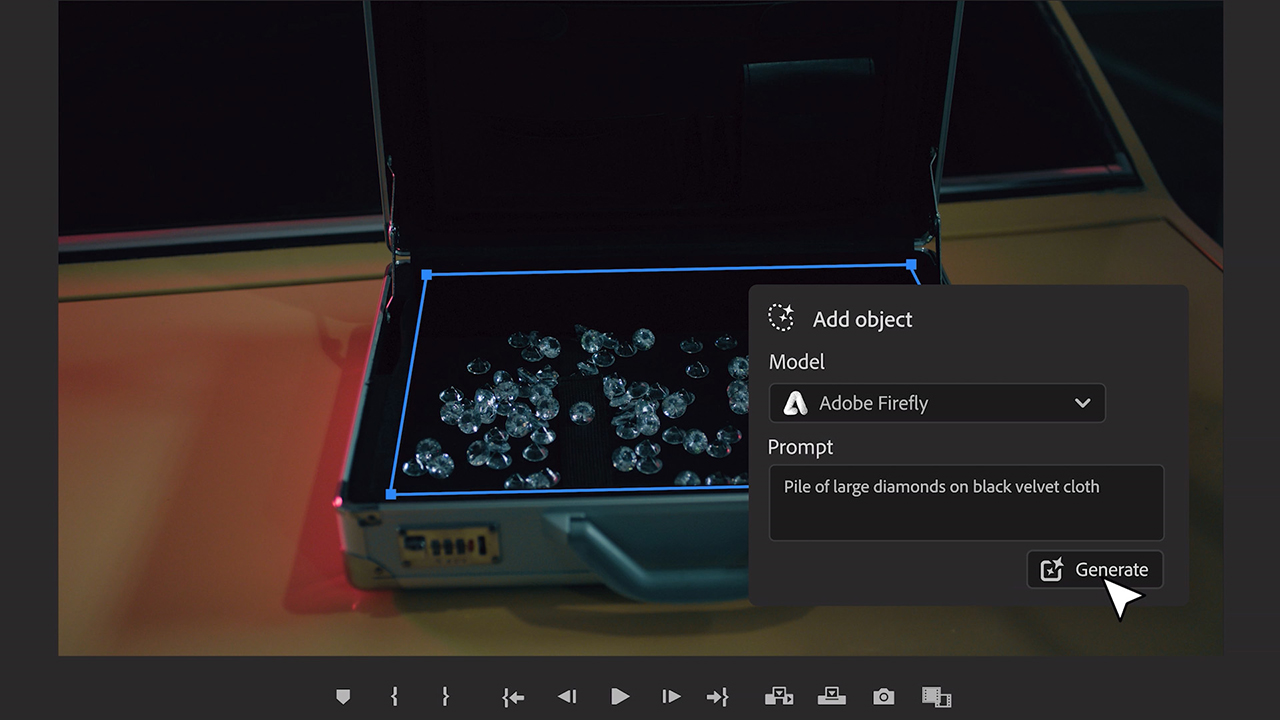

The biggest of Adobe’s NAB announcements was that Firefly, Adobe’s generative AI model, will be integrated into Premiere Pro by year-end. Users can expect the initial feature set to include object addition and removal, both generated by Adobe’s in-development video model.

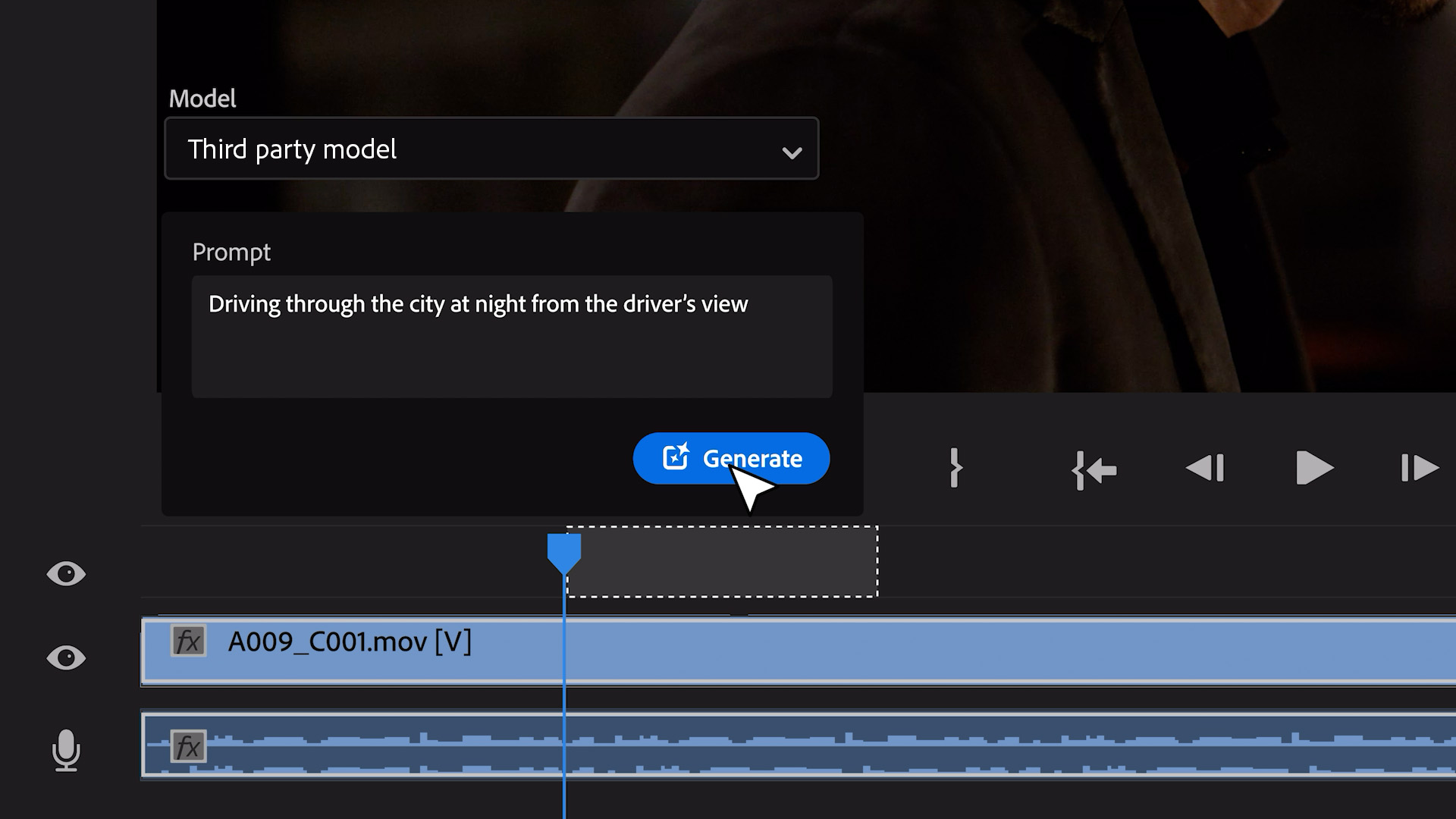

Also on the roadmap are the ability to generate B-roll from text prompts and the ability to extend the length of existing video clips.

In Premiere, users will also be able to integrate third-party AI models that may be better tuned for a specific task, such as OpenAI’s Sora, Runway and Pika.

“In addition to our own models, which are all ethically trained and commercially safe, we want to give our customers the creative choice to use whatever they want,” Saccone explained. “It’s important to give customers the creative freedom to work with whatever models they desire.”

While final specs are still being determined, Saccone expressed confidence in delivering a convincing, broadcast-quality result in the initial public beta, with 4K output on the horizon.

“I think the reality with these generative models is that all of them are different, and some are going to be better at horses than diamonds,” said Saccone. “Models will just continue improving and evolving over time.”

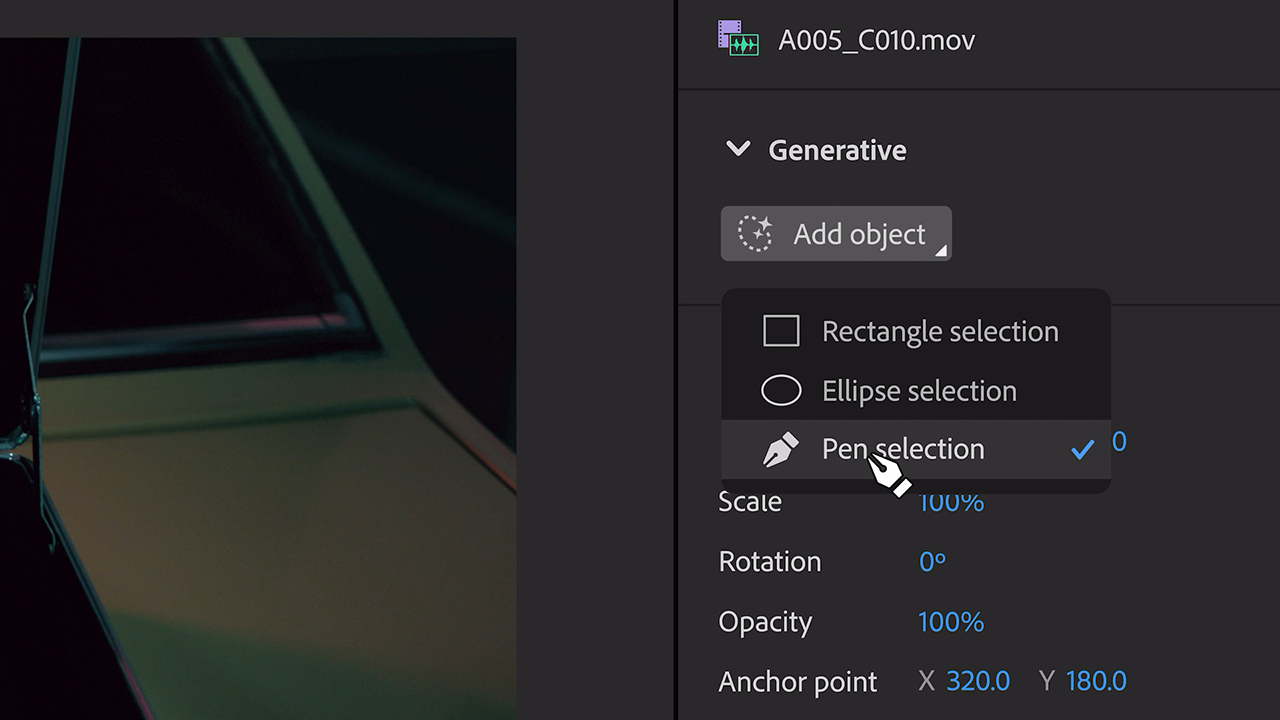

The new AI-powered tools use Premiere Pro’s existing masking and tracking capabilities, which are also being overhauled as part of this update.

“In order to do the object addition or removal, we have to be able to select regions of a frame and track it over time as it moves. And the tools for doing that in Premiere basically needed to be overhauled,” noted Saccone. “As a prerequisite to the object addition or removal work, we’re overhauling all of our masking tools inside of Premiere as well.”

Workflows for AI in Premiere Pro

“What we found from our customers is that they really don’t want to be taken out of the experience of their creative workflow,” said Saccone.

“So to the extent that we can accommodate that in app, we’d really like to. Much like we have support for third-party plugins, filters and effects in Premiere and Photoshop and in VFX today, it’s really not any different.”

Adobe plans to develop SDKs and APIs that let vendors integrate their AI models deeply into Creative Cloud apps, with the goal of providing a seamless, native user experience. Saccone likened the approach to how Adobe currently supports third-party plugins, except now with AI capabilities.

Balancing power and ethics in an AI-powered future

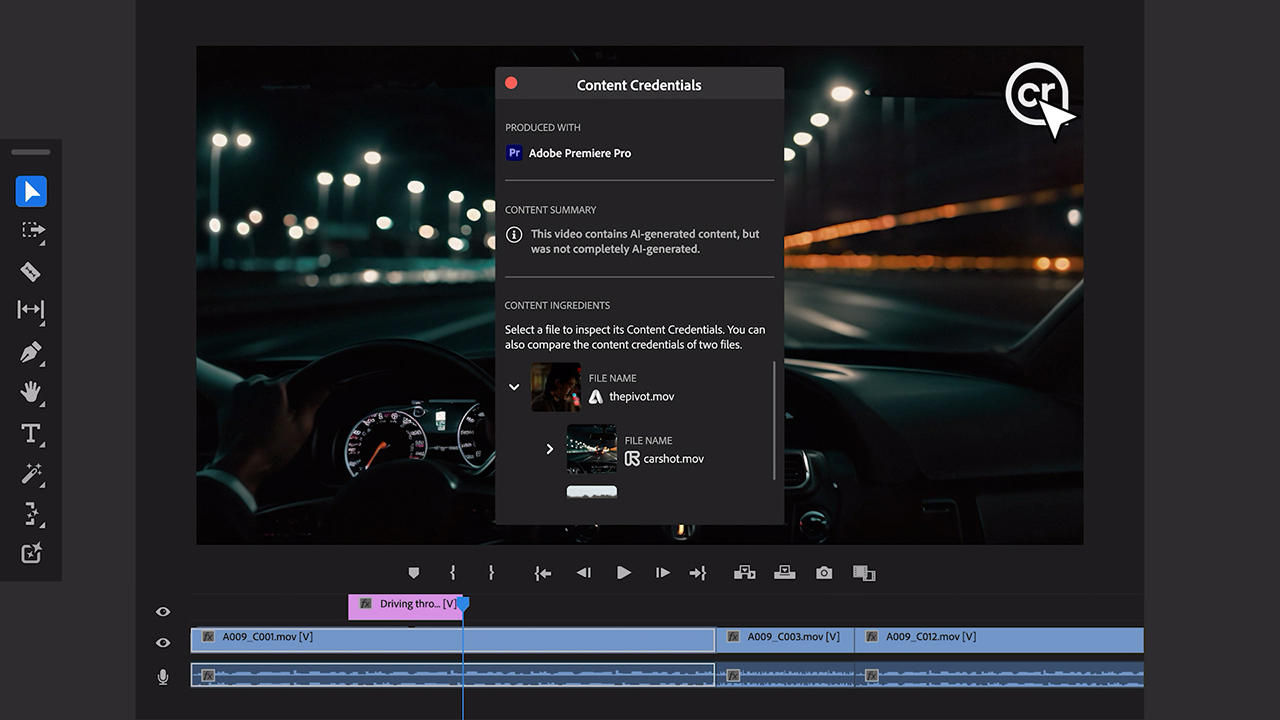

As generative AI advances and manipulated content becomes easier than ever to create, Adobe is working to embed standards of transparency and ethics into its tools.

The company’s Content Authenticity Initiative, first launched in 2019, will play a key role in this.

“It’s a way to embed tamper-evident metadata into a file that tells you how it was created and who evolved it,” explained Saccone. “Our goal is to make sure that everything that comes out of our products is appropriately stamped.”

Adobe is also emphasizing the rigorous standards it applies to training its own AI models, using only content the company owns or public domain assets. However, Saccone acknowledged that those same assurances may not apply to all third-party models that will soon integrate with Creative Cloud.

“I think, over time, as all of this levels out and normalizes in the industry, you’re going to see more and more people adopting things like content authenticity. You’re going to see probably some standards bodies adopting things like CAI as well,” said Saccone. “We hope that’s the case because we really do want it to be pervasive.”

AI buzz aside, the Premiere Pro team had a series of other updates on the NAB Show floor, with After Effects also seeing some major 3D workflow upgrades.

A Premiere Pro public beta, released in January and slated for full availability in the coming weeks, includes new audio capabilities driven by Adobe’s Sensei AI. Clips can now be automatically tagged as dialog, music, ambient sound or sound effects, giving editors access to the most relevant tools for each type of audio via a contextual menu.

Looking further into the future, Saccone hinted at the potential for Adobe to bring its flagship video editor into the browser or to develop more focused web-based video tools for creators who may not need Premiere Pro’s full capabilities.

“I think there’s this whole world of people out there that want to edit pro-quality video, but they might not identify as a video editor per se. So maybe they don’t need everything that’s in Premiere but they need something competent and capable. And browser can be a good place to do that,” said Saccone.

tags

Adobe, Adobe Creative Cloud, Adobe Premiere, Adobe Premiere Pro, Artificial Intelligence, Content Authenticity Initiative, Generative AI, NAB Show 2024, NAB Show News, Paul Saccone, video editing

categories

Featured, NAB Show, Video Editing